Cloud that can think

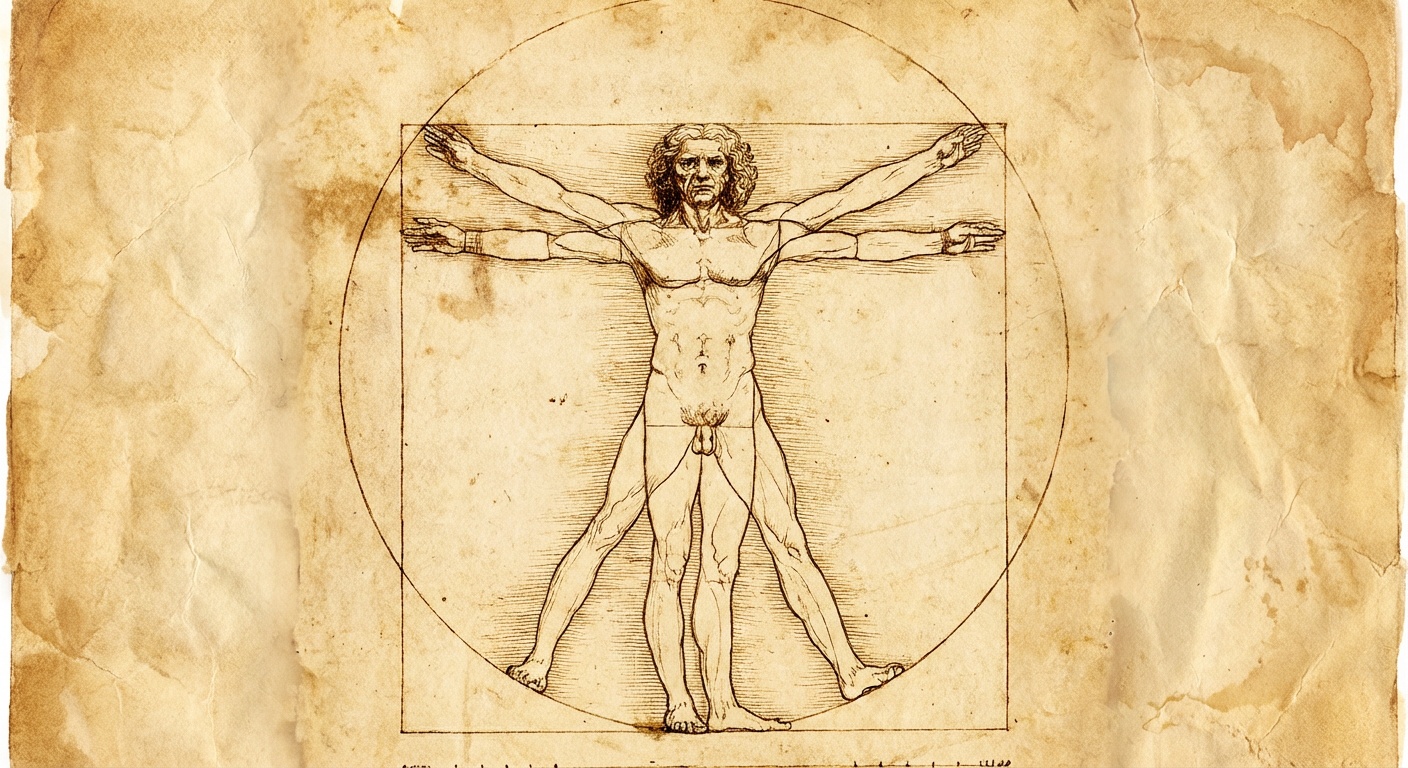

The all-in-one cloud for AI agents. Every model, every MCP tool, one safe serverless runtime.

Describe what your agent should do in plain prose, the way you'd brief a new hire. No SDK, no YAML, no graphs.

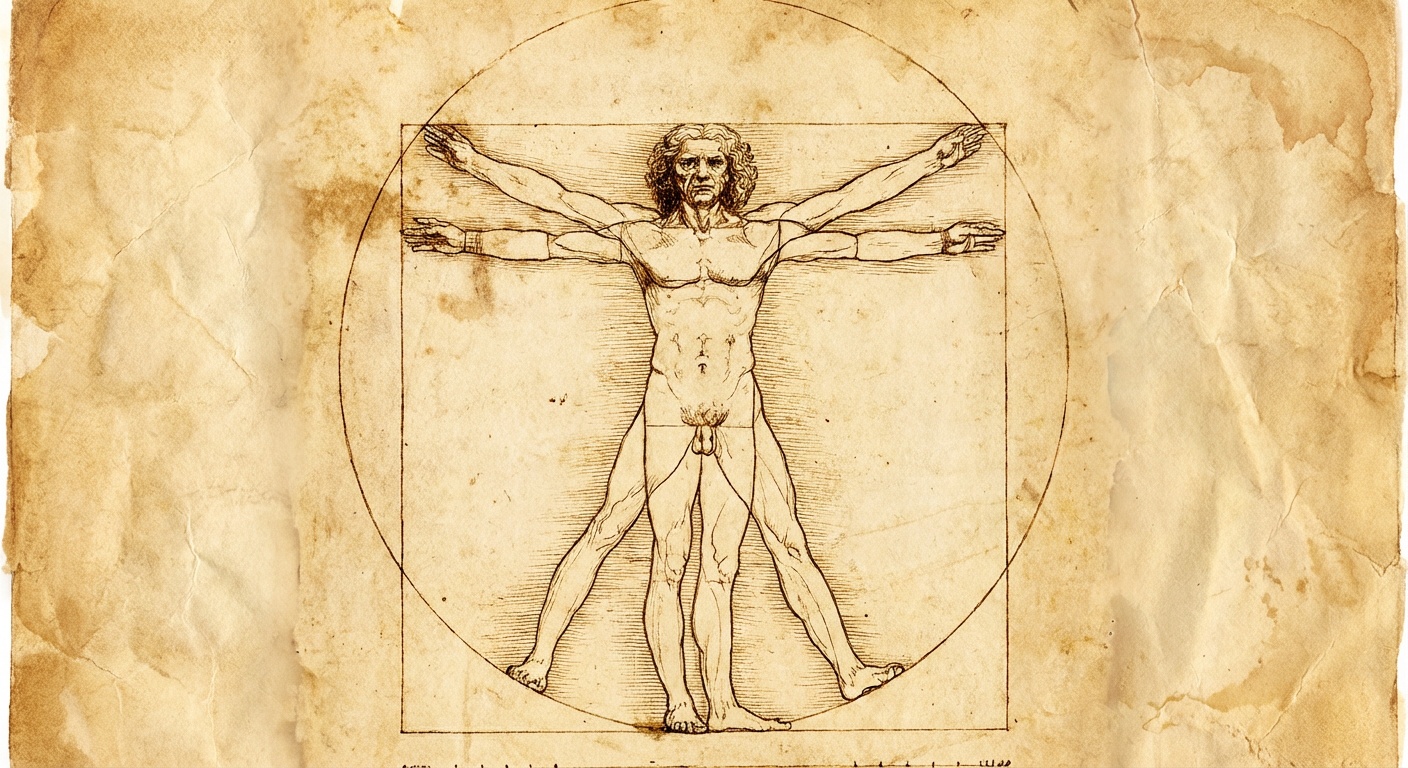

Any MCP tool, any model, connected through an encrypted vault. Keys never reach the LLM, and every run is sandboxed.

One click to production. Auto-scale from zero, live traces, cost caps — and the agent reasons through each step on its own.

Open frameworks leak API keys into prompts and run tools on your host. FlyMy.AI keeps credentials in a vault, isolates every run, and never lets your LLM touch your secrets.
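To make "keys never reach the LLM" concrete, here is a minimal sketch of the general pattern, not FlyMy.AI's implementation. It assumes the model only ever emits an opaque handle such as `{{secret:GITHUB_TOKEN}}`, and the real credential is substituted inside the tool executor at call time. The `Vault` class, the placeholder syntax, and `run_tool` are hypothetical names chosen for illustration.

```python
import re
from dataclasses import dataclass, field

# Hypothetical handle syntax the model may emit instead of a real key.
SECRET_REF = re.compile(r"\{\{secret:([A-Z0-9_]+)\}\}")


@dataclass
class Vault:
    """Toy in-memory stand-in for an encrypted secret store (assumption)."""
    _secrets: dict[str, str] = field(default_factory=dict)

    def put(self, name: str, value: str) -> None:
        self._secrets[name] = value

    def resolve(self, text: str) -> str:
        # Replace every handle with its real value; only the executor calls this.
        return SECRET_REF.sub(lambda m: self._secrets[m.group(1)], text)


def run_tool(vault: Vault, tool_args: dict[str, str], log: list[str]) -> dict[str, str]:
    # Log the arguments exactly as the model produced them: handles, not values.
    log.append(f"tool call args={tool_args}")
    # Resolve secrets at the last moment, just before the outbound request.
    return {key: vault.resolve(value) for key, value in tool_args.items()}


vault = Vault()
vault.put("GITHUB_TOKEN", "ghp_example")
audit: list[str] = []
args = run_tool(vault, {"auth": "Bearer {{secret:GITHUB_TOKEN}}"}, audit)
print(args["auth"])  # the tool receives the real token
print(audit[0])      # the log only contains the handle
```

The design choice here is that the prompt, the model output, and the log all share the same handle-only view; the plaintext value exists only in the executor's outbound arguments.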

01

API keys and OAuth tokens live in an encrypted vault. Never injected into prompts, never written to logs.

02

Each agent run executes in its own isolated container. Filesystem, network, and memory boundaries by default.

03

Every tool gets the minimum permissions it needs. Audit every call, revoke in one click.
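The least-privilege, audit, and revoke ideas above can be sketched as a deny-by-default gate. This is an illustrative assumption, not FlyMy.AI's API: `ToolGate`, `ToolGrant`, `PermissionDenied`, and the scope strings such as `"gmail.readonly"` are hypothetical. Each tool gets an explicit set of scopes, every call attempt is logged whether it succeeds or not, and revocation is a single flag flip.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


class PermissionDenied(Exception):
    pass


@dataclass
class ToolGrant:
    """Hypothetical per-tool grant: the exact scopes a tool may use."""
    scopes: frozenset[str]
    revoked: bool = False


@dataclass
class ToolGate:
    grants: dict[str, ToolGrant] = field(default_factory=dict)
    # (UTC timestamp, tool, scope, allowed) for every attempted call.
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def grant(self, tool: str, *scopes: str) -> None:
        self.grants[tool] = ToolGrant(frozenset(scopes))

    def revoke(self, tool: str) -> None:
        # One flag flip; every later call from this tool is refused.
        if tool in self.grants:
            self.grants[tool].revoked = True

    def check(self, tool: str, scope: str) -> None:
        grant = self.grants.get(tool)
        # Deny by default: unknown tools, revoked grants, and unlisted scopes fail.
        allowed = grant is not None and not grant.revoked and scope in grant.scopes
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), tool, scope, allowed))
        if not allowed:
            raise PermissionDenied(f"{tool} may not use scope {scope!r}")


gate = ToolGate()
gate.grant("gmail", "gmail.readonly")
gate.check("gmail", "gmail.readonly")      # allowed and logged
gate.revoke("gmail")
try:
    gate.check("gmail", "gmail.readonly")  # refused after revocation
except PermissionDenied as err:
    print(err)
```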

MCP tool calls for agentic workflows. Frozen graphs for real-time streaming. Same SDK, same infra, with GPU and CPU clusters that scale from a chatbot to a robot brain.

Describe what you need in a prompt. The agent picks models, calls tools, reasons through steps, and delivers the result. One run, and the whole cycle happens automatically.

Gmail, Slack, GitHub, HubSpot, Notion, Jira: 50+ MCP tools with managed OAuth out of the box. Describe the workflow in a prompt, and the agent figures out which tools to call and when.

Define a pipeline of tools (ASR, LLM, TTS), describe the graph in a prompt, and freeze it. The frozen graph scales as a single streaming unit, with sub-200ms latency across 40+ languages.
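A minimal sketch of what "freezing" a graph could mean, under stated assumptions: the stage order is fixed once, and the stages are chained as lazy generators so chunks stream through one at a time with no per-step planning. `freeze` and the `fake_asr`/`fake_llm`/`fake_tts` stubs are hypothetical stand-ins for real models, not FlyMy.AI's SDK.

```python
from typing import Callable, Iterable, Iterator

# A stage turns one stream of chunks into another (e.g. audio -> text).
Stage = Callable[[Iterator], Iterator]


def freeze(*stages: Stage) -> Callable[[Iterable], Iterator]:
    """Fix the stage order once; the result runs as one streaming unit."""
    frozen = tuple(stages)  # immutable: no re-planning or re-routing at run time

    def run(source: Iterable) -> Iterator:
        stream: Iterator = iter(source)
        for stage in frozen:
            stream = stage(stream)  # chain lazily; nothing runs until consumed
        return stream

    return run


# Hypothetical stand-ins for real ASR / LLM / TTS models.
def fake_asr(audio_chunks: Iterator[bytes]) -> Iterator[str]:
    for chunk in audio_chunks:
        yield chunk.decode("utf-8")


def fake_llm(texts: Iterator[str]) -> Iterator[str]:
    for text in texts:
        yield f"reply to: {text}"


def fake_tts(replies: Iterator[str]) -> Iterator[bytes]:
    for reply in replies:
        yield reply.encode("utf-8")


voice_agent = freeze(fake_asr, fake_llm, fake_tts)
for audio_out in voice_agent([b"hello", b"where is my order"]):
    print(audio_out)
```

Because each stage is a generator, the first reply can leave the pipeline before later input chunks arrive, which is the property a streaming graph relies on.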

Freeze a voice graph with ASR, LLM, TTS, and CRM tools. The agent handles calls in real time, reads CRM history, resolves issues, and escalates when needed. Scales to thousands.

Freeze a VLA (Vision-Language-Action) pipeline: YOLO spots the target, a VLM understands the scene, and the VLA model outputs joint trajectories for the robot arm directly. No manual motion planning.

Serverless GPU fleet built for real-time AI agents. Sub-second cold starts, auto-scaling from zero to thousands, and pay-per-second pricing, so your agents think fast and your invoices stay small.

No prompt engineering. No chain management. No tool wiring. Just describe what you need, and FlyMy.AI handles the reasoning.

100+ models, 50+ MCP tools, and growing. One API key to access the entire AI stack.

MCP agents reason step by step, calling 50+ MCP tools as needed. Frozen graphs stream at wire speed: same engine, different mode. Watch both work in real time.

Thinking agents, real-time pipelines, frozen graphs. Start building with FlyMy.AI today. Free during beta.